Monitoring and Evaluation for learning and performance improvement

Monitoring and Evaluation (M&E) is a continuous management function to assess if progress is made in achieving expected results, to spot bottlenecks in implementation and to highlight whether there are any unintended effects (positive or negative) from an investment plan, programme or project (“project/plan”) and its activities.

The processes of planning, monitoring and evaluation make up the Results-Based Management (RBM) approach, which is intended to aid decision-making towards explicit goals (see RBM). Planning helps to focus on results that matter, while M&E facilitates learning from past successes and challenges as well as from those encountered during implementation.

An M&E system encourages participation and increased ownership of a project/plan if it is developed together with all key stakeholders. Its elements are:

- (a) Result Frameworks or logframes (“RF”), which are tools to organize intended results, i.e. measurable development changes. RFs inform the development of the M&E plan, and both must be consistent with each other (see RBM);

- (b) the M&E plan, which describes the functions required to gather the relevant data on the set indicators and the methods and tools required to do so. The M&E plan is used to systematically organize the collection of specific data to be assessed, indicating the roles and responsibilities of project/plan stakeholders. It ensures that relevant progress and performance information is collected, processed and analyzed on a regular basis to allow for real-time, evidence-based decision-making;

- (c) the various processes and methods for monitoring (such as regular input and output data gathering and review, participatory monitoring and process monitoring) and for evaluation (including impact evaluation, thematic studies, surveys and economic analysis of efficiency; see FEA); and

- (d) the Management Information System, which is an organized repository of data (often georeferenced) that assists in managing key numeric information related to the project/plan and its analysis.

Refresher of RBM terminology: Impact = improvement in people’s lives (long-term, widespread improvement in society). Deliverables: Outputs = capital goods, products and services produced.

Components of M&E system

An M&E system refers to all the functions required to measure the progress of a project/plan and to assess the achievement of its results. The system is usually composed of a set of results measured by indicators (together called the result framework), monitoring tools, and a manual that describes the roles and responsibilities related to its functioning.

- Monitoring is a continuous process by which stakeholders obtain regular feedback on progress towards achieving the set milestones and results (often focusing more on process, activities, inputs and outputs).

- Evaluation is the periodic review of the results of a project/plan (typically carried out at mid-term or at completion) towards its outcomes, development goals and impact (see Impact Evaluation).

Both monitoring and evaluation processes enhance the effectiveness of project/plan implementation and contribute to its ongoing revision and update. These processes also promote accountability, where implementers have clearly defined responsibilities, roles and performance expectations, including the prudent use of resources. For public sector managers and policy-makers, for example, this includes accountability to taxpayers and citizens. Through the systematic collection of information, M&E systems also provide evidence for mid-term and completion results assessments as well as for beneficiary-level impact analysis. M&E also enhances learning and encourages innovation to achieve better results and contribute to the scaling up of projects.

M&E considerations at design stage

The design of an M&E system should begin at the same time as overall project preparation. As a general rule, the M&E system should be designed in close partnership with all relevant stakeholders, as this helps to ensure that the project/plan objectives and targets, and how they will be measured, are well understood and shared. This understanding can then potentially facilitate the establishment of new institutions to take on the M&E role. Adequate resources need to be allocated for implementation of M&E.

Budgets for M&E-related activities typically lie between 2 and 5 percent of the overall project budget, as a rough rule of thumb. When designing the initial budget, M&E expenditure should be kept distinct from other management costs, with detailed budget items for staffing, training, technical assistance, surveys and studies, workshops and equipment, allowances for participatory stakeholder consultations, communication and publication. It should be remembered that projects are often essentially large-scale experiments, so M&E expenditures are essential to learn the lessons needed for future policies and programmes. If the analysis is done well and based on evidence, this will translate into considerable savings for government budgets and investments.
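As a rough illustration of the rule of thumb above, the sketch below computes an indicative M&E budget envelope from a total project budget. The function name, the default shares and the example figure of 10 million are assumptions made for illustration only, not prescribed values.

```python
def me_budget_range(total_budget, low_share=0.02, high_share=0.05):
    """Return the indicative (low, high) M&E allocation for a project budget."""
    if total_budget < 0:
        raise ValueError("total_budget must be non-negative")
    return total_budget * low_share, total_budget * high_share


# Hypothetical project with a total budget of 10 million (any currency).
low, high = me_budget_range(10_000_000)
print(f"Indicative M&E budget: {low:,.0f} to {high:,.0f}")
```

The resulting envelope would then still need to be broken down into the detailed budget items listed above.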

A successful M&E system must allocate the following:

- sufficient budget (for information management, participatory monitoring activities, field visits, surveys, etc.);

- sufficient time (for a start-up phase that is long enough to establish the M&E system, conduct a baseline survey, train staff and partners, include primary stakeholders in M&E, monitor and reflect);

- sufficient capacity and expertise (to support M&E development, with the skilled and well-trained people required for good quality data collection and analysis). If appropriate, external expertise in the design of a baseline study and an impact evaluation should be engaged;

- sufficient flexibility in project design enabling the M&E system to influence the project strategy during implementation.

M&E considerations at implementation stage

Good practice of M&E during implementation requires that result indicators and target values have been well-defined and agreed upon in the result framework (see RBM). It is essential to establish a clear distinction at project design stage between outputs, outcomes and other higher level development objectives. This will ensure that selected indicators are appropriate to their respective level along the results chain and also help determine institutional responsibilities and timelines for M&E.

For each selected indicator, M&E tools (means of verification) have to be defined. Examples are semi-structured interviews; focus group discussions; surveys and questionnaires; regular workshops and roundtables with stakeholders; field monitoring visits; testimonials; and scorecards. Frequency and responsibilities for applying the tools, for analysing relevant information and for reviewing this information must be specified in an M&E plan.
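One possible way to record these specifications is sketched below: each indicator is stored with its level in the results chain, its tool, frequency and responsible party, and a simple check lists indicators whose entry is incomplete. The class name, field names and example entries are hypothetical and only illustrate the idea.

```python
from dataclasses import dataclass


@dataclass
class MEPlanEntry:
    indicator: str    # result indicator from the result framework
    level: str        # position in the results chain, e.g. "output" or "outcome"
    tool: str         # means of verification
    frequency: str    # how often the tool is applied
    responsible: str  # who applies the tool and reviews the information


def incomplete_entries(plan):
    """Return the indicators whose plan entry leaves any field blank."""
    return [
        entry.indicator
        for entry in plan
        if not all(
            text.strip()
            for text in (entry.level, entry.tool, entry.frequency, entry.responsible)
        )
    ]


# Hypothetical entries, for illustration only.
plan = [
    MEPlanEntry("Farmers trained (number)", "output",
                "field monitoring visits", "quarterly", "M&E unit"),
    MEPlanEntry("Household income (change)", "outcome",
                "survey", "", "external specialist"),
]
print(incomplete_entries(plan))
```

In this example the second entry is reported because no frequency has been specified for it.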

Monitoring, review and evaluation

Monitoring, review and evaluation all deal with collection, analysis and use of information to enable decisions to be made. There is some overlap and all are concerned with systematic learning, but broadly, the three processes can be distinguished as shown in Figure 1. Each type of data collection has defined reporting templates and essential data is consistently incorporated into a management information system (MIS, see below).

Figure 1: Comparison of monitoring, review and evaluation

| | Monitoring | Review | Evaluation |
|---|---|---|---|
| When? | On-going | Regular periodic, often six-monthly or annually (see Implementation support) | Periodic: mid-term, completion and ex post |
| Why? | To check progress and take remedial actions when needed | To review progress and adjust implementation strategy as needed | To learn broad lessons applicable to other programmes/projects and as an input to policy review |

Monitoring types can further be broken down as follows:

- Progress/implementation monitoring of human, financial and physical resources. Frequency: updated monthly and reported quarterly and annually.

- Process monitoring, to support regular progress/implementation monitoring and to assess institutional changes and relationships in order to rapidly identify management responses to upcoming queries. Frequency: quarterly/half-yearly.

- Results monitoring. Frequency: continuous.

Continuous/basic monitoring as well as evaluation efforts can be complemented, as needed, by thematic, diagnostic and case studies to enhance implementation tactics and/or devise more effective strategies.

On special occasions such as mid-term review or preparation and evaluation in relation to new phases, each project has the opportunity to change course if necessary. Ideally some kind of rapid impact assessment is also carried out at such times, bearing in mind that some impacts need a long time before they can be recorded.

Baseline study and related impact evaluation

Note that a project may ideally have three different baselines, namely:

A baseline is a necessary reference point for assessing change. A baseline study defines the benchmarks against which project progress is to be measured. Depending upon the results indicators of the project, the baseline study may record food security characteristics, incomes and basic household assets and services, environmental parameters and/or productivity and profitability levels. Subsequent mid-term, end-of-project and impact assessments measure key outcomes and impacts with respect to changes from the baseline (see Impact Evaluation).
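The comparison against baseline values can be sketched as follows. The indicator names and figures are invented for illustration, and a simple before/after difference of this kind does not by itself attribute the change to the project.

```python
def change_from_baseline(baseline, follow_up):
    """Return (absolute change, percentage change) per indicator."""
    changes = {}
    for name, base in baseline.items():
        if name not in follow_up:
            continue  # indicator not measured in the follow-up round
        diff = follow_up[name] - base
        # Percentage change is undefined when the baseline value is zero.
        pct = diff / base * 100 if base != 0 else None
        changes[name] = (diff, pct)
    return changes


# Hypothetical baseline and mid-term values, for illustration only.
baseline = {"maize_yield_t_per_ha": 2.0, "food_secure_households_pct": 40.0}
midterm = {"maize_yield_t_per_ha": 2.5, "food_secure_households_pct": 50.0}

for name, (diff, pct) in change_from_baseline(baseline, midterm).items():
    print(f"{name}: {diff:+.1f} ({pct:+.0f}%)")
```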

Participatory monitoring and evaluation

Participatory M&E is about involving participants directly in the M&E process. It can add value in two ways: ensuring that relevant information and experience is gathered from those who are immediately affected by the project, and increasing accountability to these participants who have a direct interest in implementation success. The process of participation further increases ownership of the activities and the likelihood of replication and sustainability. Special efforts need to be made to incorporate stakeholders at all levels to ensure that they contribute to and benefit from knowledge-sharing.

M&E for learning and performance improvement

M&E systems will only add value to project implementation through interpretation and analysis, by drawing on information from other sources and adapting it for use by project decision makers and a range of key partners. Knowledge generation through M&E should never stop at the basic capturing of information or rely exclusively on quantitative indicators; it should also address the “why” questions. Here, more qualitative and participatory approaches become particularly important for analyzing the relationship between project activities and results. Evaluation therefore serves to establish attribution and causality, and forms a basis for accountability and learning by staff, management and clients. The challenge early on during project implementation is to design effective learning systems that can underpin management behavior towards results, and to come up with strategies to optimize impact.

In this context, learning is defined as formulating responses to identified constraints and implementing them in real time. At project/plan level, useful knowledge-sharing and learning instruments, which can pick up information and analysis from the M&E system, include: i) summarized studies and publications on lessons learned; ii) case studies documenting successes and failures; iii) publicity material including newsletters, radio and television programmes; iv) formation of national and regional learning networks; v) periodic meetings and workshops to share knowledge and lessons learned; vi) research-extension liaison or feedback meetings; vii) national and regional study tours; viii) preparation and distribution of technical literature on improved practices; and ix) routine supervision missions, mid-term reviews or evaluations and project completion (end-of-project) reports (see Implementation support).

Reporting and data collection in a management information system

Project interventions in dispersed and often remote locations, diverse implementation units, collaboration with multiple partners and complex M&E requirements pose important challenges to project managers and other stakeholders in tracking events on the ground. However, without information on outreach achieved and outputs or services delivered, it would be difficult to decide on appropriate responses, or make needed adjustments and refinements to plans, implementation approaches and strategies. To maintain good oversight and manage information on indicators and on the systems used for data collection, recording and reporting, projects therefore turn to management information systems (MIS). While many projects use Excel spreadsheets as their MIS, once a project reaches a certain size it becomes more convenient to use a web-based solution with a more complex database system. The MIS and the M&E system complement each other, as the MIS supports:

- day-to-day business processes;

- transaction processing;

- management control;

- budget planning;

- tracking progress against expected targets and thereby comparing performance between different regions where the project is implemented;

- systematic data capture, storage and retrieval;

- flagging implementation issues and alerts;

- communication among stakeholders; and

- accountability to project management and stakeholders with regard to reliable project data.
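Two of the functions listed above, tracking progress against targets across regions and flagging implementation issues, can be sketched as follows. The record layout, the region names and the 50 percent alert threshold are assumptions chosen for illustration; an actual MIS would define its own.

```python
def progress_by_region(records, alert_threshold=0.5):
    """Compute the achievement ratio (actual / target) per region and flag low performers."""
    report = {}
    for rec in records:
        target = rec["target"]
        # The ratio is left undefined when no target has been set.
        ratio = rec["actual"] / target if target else None
        report[rec["region"]] = {
            "ratio": ratio,
            "alert": ratio is not None and ratio < alert_threshold,
        }
    return report


# Hypothetical records for one output indicator, for illustration only.
records = [
    {"region": "North", "indicator": "farmers_trained", "target": 400, "actual": 300},
    {"region": "South", "indicator": "farmers_trained", "target": 400, "actual": 100},
]

for region, status in progress_by_region(records).items():
    flag = "ALERT" if status["alert"] else "ok"
    print(f"{region}: {status['ratio']:.0%} of target ({flag})")
```

Here the South region, at a quarter of its target, would be flagged for follow-up while the North region would not.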

While the MIS helps to identify output indicators and basic outcome indicators, the M&E system draws from MIS data to assess progress in achievement of results and impact and to support learning and adaptation. Additional inputs for the M&E system to produce the desired information are surveys and other interactions with stakeholders on a periodic or ad hoc basis, such as thematic studies, baseline and impact surveys and stakeholder workshops. These often need to use basic and reliable MIS data to develop survey frameworks, and to assess basic assumptions on scope of outreach etc.

TIPS

- The MIS has to be designed with a view to making decision-relevant information available at the right time in the right format.

- Managers have to appreciate what an MIS can do for the system to work.

- The project must be equipped with a minimum technical capacity to oversee the MIS and its functioning and development at all times, as external IT specialists cannot be expected to have the flexibility or insight into the overall M&E requirements.

- Good practice methods should be used to minimize the time between data collection and data entry. Mobile phones and tablets, for example, enable real-time performance reporting by implementing partners.

- A system of data validation should be put in place to minimize errors in collection and entry.

- User-friendly and effective dashboards should be developed at every level of the project.
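The tip on data validation could, for instance, be implemented as a set of simple checks applied to each record at the point of entry. The sketch below is only one possible approach; the field names and the rules (required fields, non-negative numeric values, ISO dates) are assumptions.

```python
from datetime import date


def validate_record(record):
    """Return a list of problems found in one data-entry record (empty if none)."""
    errors = []

    # Required fields must be present and non-empty.
    for field in ("site", "indicator", "value", "date"):
        if record.get(field) in (None, ""):
            errors.append(f"missing {field}")

    # Reported values are assumed to be non-negative numbers.
    value = record.get("value")
    if value not in (None, ""):
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            errors.append("value must be numeric")
        elif value < 0:
            errors.append("value must be non-negative")

    # Dates are assumed to be ISO strings such as "2024-03-01".
    raw_date = record.get("date")
    if raw_date not in (None, ""):
        try:
            date.fromisoformat(raw_date)
        except (TypeError, ValueError):
            errors.append("date is not a valid ISO date")

    return errors


# Hypothetical records, for illustration only.
good = {"site": "Village A", "indicator": "farmers_trained", "value": 25, "date": "2024-03-01"}
bad = {"site": "", "indicator": "farmers_trained", "value": -3, "date": "2024-13-01"}
print(validate_record(good))
print(validate_record(bad))
```

Records that return a non-empty list would be sent back for correction before they enter the MIS.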

Internal and external project responsibilities and capacities required for M&E

As various actors are involved in the implementation of the M&E system, each has specific roles and responsibilities, namely:

- programme implementers and technical officers generate progress reports on the components they manage;

- the planning unit sets targets for data and information requirements with input from technical specialists and allocates financial resources for the independent or integrated M&E unit;

- the M&E unit collects the information from implementers and beneficiaries and submits consolidated project reports to main stakeholders (in close coordination with the planning unit); and

- project stakeholders analyse progress made in order to identify issues and opportunities and revise the implementation strategy accordingly.

For the system to work, planning and M&E units must analyse and report in close coordination on results, as well as constraints and bottlenecks, and draw and implement lessons for continuous improvement. Project-internal M&E specialists have to be equipped to lead work related to progress/implementation monitoring. Often the M&E unit takes on the role of guiding detailed project planning and target setting without a clear mandate or responsibility for it, because planning units are not equipped for the task.

External specialist expertise may be relevant in areas such as:

- design, development, operation and maintenance of project MIS;

- capacity strengthening for M&E in general (statistical capacity, etc.);

- process monitoring;

- results monitoring and sample-based validation of findings of progress/implementation monitoring;

- thematic studies, including economic analysis (see FEA);

- baseline, mid-term and end-of-project impact studies and reports; and

- design and piloting of participatory M&E tools for institutions, and training.

The implementing unit may hire the services of specialized agencies to undertake these activities. However, the final responsibility for maintaining oversight and acting on the results of such activities, as for MIS development, lies clearly with project management.

Key lessons from M&E experience in the field

Experience has shown that M&E components and associated tools by themselves may be implemented mechanically without offering much usefulness for decision-making. These lessons have been translated into practical suggestions to strengthen M&E systems:

- Get management and stakeholders engaged in and assuming ownership of the M&E work. Project management and staff responsibilities must be internalized, to avoid the perception that M&E is a standalone reporting task.

- Strengthen project capacity for planning and M&E, and create a learning culture. Projects are often experimental, and need to maximize lesson-learning for potential scaling up (see Scaling up).

- Use triangulation and mixed and participatory methods (see Impact evaluation): complementary use of M&E tools and of quantitative and qualitative methods is important because no single tool will provide all the information. At times external views and expertise are essential for new critical insights and specialist analysis.

- Build networks and share learning between projects and programmes. Collaboration is critical to rapidly disseminate and access practical knowledge on M&E.

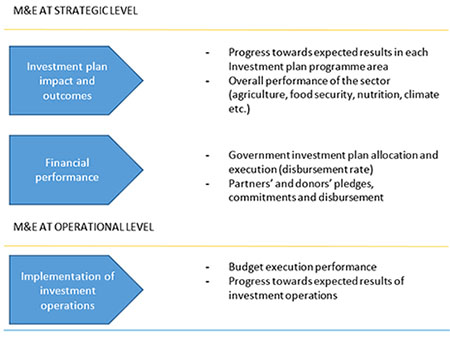

M&E at national investment plan level

M&E of an investment plan (see Investment Plan) needs to be done within a coherent national framework to measure not only the performance of projects and programmes aligned to the investment plan, but also the general performance of the agriculture sector as a whole. Monitoring in this context is the ongoing process by which investment plan stakeholders obtain regular feedback on the progress being made towards achieving the envisaged results. Evaluation aims at assessing the deeper level changes of the investment plan (on the sector or the entire economy).

An investment plan is typically composed of a set of investment operations (programmes or projects). When setting up the M&E framework it is assumed that implementation of each project contributes to the achievement of the associated programme and investment plan component results. Respective indicators therefore need to establish coherent linkages between investment plan components and individual projects listed under the investment plan (through programmes covering several projects).
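The linkage between projects, programmes and investment plan components described above can be checked mechanically. The sketch below groups projects under the component they contribute to and lists any project whose programme is not linked to a component; all names and the two-level mapping are hypothetical.

```python
from collections import defaultdict


def projects_by_component(project_to_programme, programme_to_component):
    """Group projects under the investment plan component they contribute to.

    Returns (grouped, unlinked): a dict of component -> sorted project names,
    and a sorted list of projects whose programme has no component link.
    """
    grouped = defaultdict(list)
    unlinked = []
    for project, programme in project_to_programme.items():
        component = programme_to_component.get(programme)
        if component is None:
            unlinked.append(project)
        else:
            grouped[component].append(project)
    return {c: sorted(p) for c, p in grouped.items()}, sorted(unlinked)


# Hypothetical names, for illustration only.
project_to_programme = {
    "Irrigation rehabilitation": "Water management",
    "Seed multiplication": "Crop productivity",
    "Rural roads": "Market access",
}
programme_to_component = {
    "Water management": "Component 1",
    "Crop productivity": "Component 1",
}

grouped, unlinked = projects_by_component(project_to_programme, programme_to_component)
print(grouped)
print(unlinked)
```

A project that appears in the second list would contribute results that cannot be traced to any component indicator, which is the kind of gap the framework is meant to avoid.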

Since these investment plans target the national level, results should be measured by at least some indicators that can be tracked from national agricultural statistics. As a general rule, M&E data should be made available from authoritative national institutions, bearing in mind that existing data may need to be adapted or upgraded to the requirements of the monitoring exercise. An example of a government unit responsible for collecting and compiling regular M&E reports is the food security monitoring unit that exists in several countries. The figure below shows the elements of M&E frameworks for investment plans at national level.

Figure: The elements of an M&E framework for an investment plan at national level

Gathering information at the results level of each investment plan component facilitates oversight of the technical and financial performance of projects, programmes and components [this is operational M&E]. It is particularly important to monitor the financial progress of ongoing investment projects because investment plans are often not fully financed from the start and can therefore serve as a tool to mobilize resources. Regular analysis of budgetary allocations and disbursement is necessary, as well as monitoring and updating the gaps between them and recording existing financial commitments [this is strategic M&E].
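The financial monitoring described above can be reduced to two simple figures per project or component. In the sketch below, the financing gap is taken as total cost minus committed funds and the disbursement rate as disbursed funds divided by committed funds; these definitions, like the example amounts, are assumptions made for illustration and may differ from those used in a given investment plan.

```python
def financial_status(total_cost, committed, disbursed):
    """Return the financing gap and disbursement rate for one project or component."""
    gap = total_cost - committed
    # The rate is undefined when nothing has been committed yet.
    rate = disbursed / committed if committed else None
    return {"financing_gap": gap, "disbursement_rate": rate}


# Hypothetical amounts in millions, for illustration only.
status = financial_status(total_cost=100, committed=60, disbursed=30)
print(f"Financing gap: {status['financing_gap']} million")
print(f"Disbursement rate: {status['disbursement_rate']:.0%}")
```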

Critical reflection on and strategic communication of M&E findings are fundamental aspects of the results-based management approach, especially given that investment plan assessments provide the evidential basis for building consensus and raising awareness between stakeholders. The assessments also generate lessons to inform and influence future sector and country assistance strategy planning and/or revision.

An example of regular monitoring of a national investment plan can be found in Bangladesh. Since the plan's adoption, the national authorities have produced annual reports covering the entire national policy and investment framework. These report on progress towards national food security and agriculture targets as well as on financial commitments and disbursements. The relevant material can be found on the website of the Food Planning and Monitoring Unit of the Ministry of Food.

With regard to evaluation, there have been various initiatives to quantify the impact of the Comprehensive Africa Agriculture Development Programme on, for example, agricultural expenditure, agricultural value-added, land and labor productivity, income and nutrition. This is always done using country-level data (International Food Policy Research Institute discussion paper).

TIPS

- It is crucial to have well-funded task forces at investment plan level and well-functioning M&E systems at project and programme levels to develop a good M&E system for a sector and make it operational.

- For M&E systems at investment plan level to function well, it is advisable to keep them as simple as possible, mainstreamed in the existing national system and with a limited number of indicators.

- Monitoring the financial dimension is critical (disbursement rate, but also updates on the financial gap). In some cases, Public Expenditure Reviews can be a good complement to a Mid-Term Review in order to analyze progress (see Plan Strategically).

Key Resources

| Resource | Description |
|---|---|
| A guide for project M&E: 2.1 An Overview of Using M&E to Manage Impact (IFAD) | Useful guide on how to use Managing for Impact under the frame of M&E, using 4 elements: guiding the project strategy for poverty impact; creating a learning environment; ensuring effective operations; developing and using the M&E System. |
| Ten Steps to a results-based Monitoring and Evaluation System (World Bank, 2004) | A guide on how to design and construct a results-based M&E system in the public sector. The handbook focuses on a comprehensive ten-step model that will help guide the user through the process of designing and building a results-based M&E system. |
| | Provides background on typology of indicators, describes how indicators are developed and applied in all project phases; provides examples of performance indicators and shows how the indicators were developed on the basis of each project's development objectives. |
| The use of monitoring and evaluation in agriculture and rural development projects (FAO, 2010) | Provides overview of problems of putting M&E into practice and identifies absence of clearly identifiable monitorable indicators and a lack of ownership and participation by the stakeholders as main weaknesses. Includes also guiding principles for result-oriented project M&E systems as a result of the review. |
| Climate-Smart Agriculture Sourcebook - Module 18 (FAO, 2013) | Presents an overview of important climate change-related assessment, monitoring and evaluation activities in policy and programme processes and project cycles. Details are provided about how to conduct assessments relating to policies and project justification and design, as well as monitoring and evaluation. |
| Stocktaking of M&E and Management Information Systems (FAO, 2012) | Key points about which ME&L approaches, methodologies and processes best serve projects in achieving results, as well as how to combine MIS and ME&L systems to ensure their usefulness for project management. |
| | This Module provides a detailed description of the methodology and procedures involved in the phase of formulation and evaluation of small-scale community or family investment projects in rural areas. |
| Project Planning and Management-C134 Unit Ten: Monitoring and Evaluation (SOAS, 2013)* | Provides guidance to aid the design and implementation of effective project M&E. Emphasizes the involvement of stakeholders in design and implementation and discusses how to create a learning environment for managers and for project implementation. |
| | Introduces participatory M&E and explores the potential benefits of PM&E for local governance, for key actors, and for multi-stakeholder processes. It sets out operational guidelines for introducing and embedding PM&E into World Bank activities and is illustrated with examples from practice. |
| Tracking results in agriculture and rural development in less-than-ideal conditions (GDPRD, 2008) | Focuses on M&E of national agriculture and rural development plans and includes broad indicators. Also refers to cases where countries established national M&E systems. |

*These documents are Unit chapters from a postgraduate distance learning module – P534 Project Planning and Management - produced by the Centre for Development, Environment and Policy of SOAS, University of London. The whole module, including study of the role of projects in development and financial and economic cost-benefit analysis, is available for study as an Individual Professional Award for professional update, or as an elective in postgraduate degree programmes in the fields of Agricultural Economics, Poverty Reduction and Sustainable Development, offered by the University of London. For more information see: http://www.soas.ac.uk/cedep/

These documents are made available under a Creative Commons ‘Attribution - Non Commercial – No Derivatives 4.0 International licence’ (CC BY-NC-ND 4.0).